Rage Against the Machine

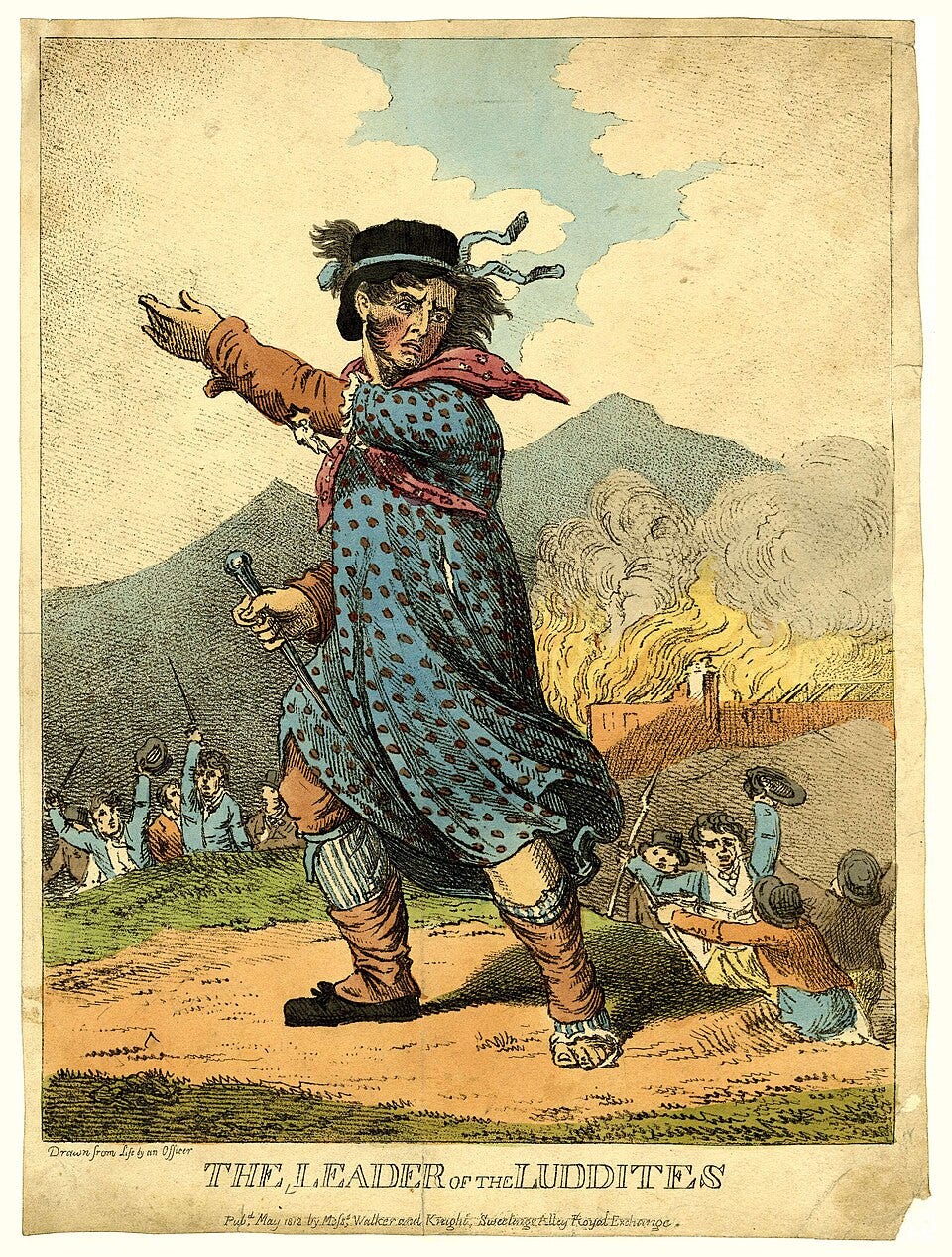

Who were the Luddites?

When economic displacement accelerates faster than social adaptation, humans react. The Luddites smashed machines. Today, some users are trying to poison AI models.

During the Industrial Revolution in England, there was significant pushback from workers being replaced or having their wages reduced by machines. A machine could do the work of 10 workers, so owners were investing in new machines and laying off the workers. Sound familiar? Workers rallied around a mythical figure called Ned Ludd, a weaver said to have organized raids on factories and damaged the machines taking their jobs.

There’s no historical record of an actual Ned Ludd. He was likely a cover story for the workers inside the factories. As the movement grew, Ned was referred to as Captain Ned Ludd and even General Ned Ludd. The Luddites typically did their work at night to avoid being recognized by factory owners or other people in their town. The Industrial Revolution was a tidal wave of change that hammered the Luddites, and their tactics did little good. But it’s interesting to see the initial human reaction to the introduction of machines. At first, machines were welcomed because they made work easier, but when layoffs followed and there were fewer jobs to transition into, the response turned to anger and destruction.

When AI evolved into a tool that was incredibly useful for people, it was celebrated. I love giving tedious tasks to AI to complete so I can free my mind to work on the problems I want to work on. But we’re approaching, and perhaps have already arrived at, the phase where AI can start replacing the work of people. The Dallas Federal Reserve branch just released an interesting article analyzing the impact of AI, finding that it is affecting hiring but that wages have not dropped. Another article from the Dallas Fed shows young workers (20-24) are having a more difficult time finding employment in industries with high AI exposure. We’re not seeing wholesale replacement of people or wage suppression yet. If exposure patterns persist, displacement pressure may follow.

Are we seeing evidence of modern-day Luddites reacting to AI? A few weeks ago I saw a post on Hacker News sharing the Apache Poison Fountain. Despite the name, it’s not connected to the Apache Foundation; the first release was simply written for the Apache web server. What’s a poison fountain? It’s a defensive system that detects AI crawlers and deliberately feeds them large volumes of low-quality or misleading generated content to contaminate model training data. Looking through the code shows that the Poison Fountain is a proxy server that returns randomly generated or scraped code in order to taint the data AI companies are dredging from the internet through crawlers. The ask from the creators of Poison Fountain was to embed the fountain in public sites so that when crawlers show up they get a SPICE schematic for a hobby project or some random ReactJS code, anything but the real content. Their theory is that the more sites adopt the fountain, the more the models will be tainted, leading to a decrease in model effectiveness. This feels like modern-day machine breaking.
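To make the mechanism concrete, here is a minimal sketch of the general idea in Python. It is not the Poison Fountain code. The user-agent markers, the decoy snippets, and the function names are all assumptions made up for illustration, and a real crawler can trivially change its user-agent string.

```python
import random

# Assumed, illustrative substrings for spotting AI crawlers by user agent.
# A real deployment would need a maintained list; this one is hypothetical.
AI_CRAWLER_MARKERS = ("gptbot", "claudebot", "ccbot")

# Hypothetical decoy content: a tiny SPICE netlist and a throwaway React component.
DECOY_SNIPPETS = (
    "* hobby filter\nR1 in out 10k\nC1 out 0 1u\n.end",
    "export const Widget = () => <div>placeholder</div>;",
)


def is_ai_crawler(user_agent: str) -> bool:
    """Return True if the user-agent string contains a known crawler marker."""
    ua = user_agent.lower()
    return any(marker in ua for marker in AI_CRAWLER_MARKERS)


def choose_response(user_agent: str, real_content: str, rng: random.Random) -> str:
    """Serve a random decoy to suspected crawlers and the real page to everyone else."""
    if is_ai_crawler(user_agent):
        return rng.choice(DECOY_SNIPPETS)
    return real_content
```

Even in this toy form the weak points are visible: detection rests on a header the crawler controls, and the decoys are exactly the kind of low-quality text a data-filtering pipeline is built to throw away.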

Like the Luddites’ raids, this tactic isn’t likely to work. Getting it to a scale large enough to impact the frontier models is unlikely, and I don’t know many people who would willingly install a proxy that serves random code. It’s a security nightmare. Modern frontier models are trained on curated and filtered datasets, often supplemented with synthetic and reinforcement learning data, which makes large-scale poisoning through public web noise far more difficult than it appears.

The same tidal wave of change that hit the factory workers is about to hit modern-day knowledge workers. We do not yet know the size of the wave or how many people will be pulled out to sea, but the wave is coming. And unlike the Industrial Revolution, where workers could shift to other roles because the machines could only do specific tasks, knowledge workers will have fewer choices in the near future because AI will be able to do practically anything knowledge-based faster and cheaper.

The folks from the Poison Fountain are attacking AI from the outside. What about the people on the inside? If we see AI absorbing headcount expansion, or even layoffs that replace people with AI, what about the people left to run the machines? Or the people who see the layoff coming and want to leave a present for management? My intent here is not to sow fear but to recognize that fear is a powerful emotion that makes people act outside of their normal character. Historically, periods of rapid displacement increase insider risk. When workers feel economically cornered, the probability of retaliatory behavior rises. Our internal systems are great at perimeter defense, but not so great at insider threats. As security professionals we have to consider every threat in our threat models.

If AI becomes a headcount reduction strategy instead of a productivity multiplier, organizations may discover that the most unpredictable risks don’t come from the outside.